We’re excited to announce that the first fully deployable MVP of the Grey Hat Labs (GHL) Platform is now operational and available for internal testing. This is a major milestone for the team: it is the first time the full multi-service system has run end-to-end in a production-like environment.

What We Accomplished

Over the course of this sprint, the team delivered:

🚀 A Cloud-Hosted, Secure Demo Environment

• The full GHL microservice architecture (schema-engine, rlie, kgf, orchestrator) is now deployed on AWS EC2.

• The environment is fully isolated, HTTPS-enabled, and accessible via a dedicated domain.

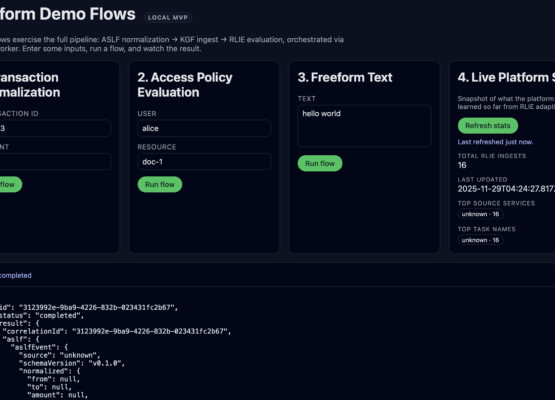

• External testers can now interact with the platform through a polished demo frontend.

⚙️ Robust CI/CD & Deployment Automation

• GitHub Actions now builds, tests, bundles, and publishes SHA-versioned Docker images to AWS ECR.

• A unified deployment script (deploy.sh) lets us roll out updates with a single command.

• All infrastructure steps are fully documented so future team members can deploy confidently.
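
As a rough illustration of what SHA-versioned tagging can look like, the sketch below builds an image reference from a registry host, a service name, and a commit SHA. The function name `image_ref`, the 12-character short SHA, and the registry host are assumptions made for this example; the actual GitHub Actions workflow and deploy.sh may tag images differently.

```python
import re

# Service names as listed in this announcement.
SERVICES = ("schema-engine", "rlie", "kgf", "orchestrator")

# Accept abbreviated or full hexadecimal commit SHAs.
_SHA_RE = re.compile(r"[0-9a-f]{7,40}")


def image_ref(registry: str, service: str, sha: str, short: int = 12) -> str:
    """Return '<registry>/<service>:<short sha>' for one service image.

    Hypothetical helper: the tag format is an assumption, not the
    project's confirmed convention.
    """
    if service not in SERVICES:
        raise ValueError(f"unknown service: {service}")
    sha = sha.strip().lower()
    if not _SHA_RE.fullmatch(sha):
        raise ValueError(f"not a hex commit SHA: {sha!r}")
    return f"{registry.rstrip('/')}/{service}:{sha[:short]}"


if __name__ == "__main__":
    # Placeholder registry host, not the project's real ECR registry.
    registry = "123456789012.dkr.ecr.us-east-1.amazonaws.com"
    sha = "0123456789abcdef0123456789abcdef01234567"
    for name in SERVICES:
        print(image_ref(registry, name, sha))
```

Tagging every image with the commit it was built from means a rollout or rollback can name an exact build instead of relying on a moving tag such as `latest`.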

🧩 Stability, Migrations & Service Interoperability

• Fixed cross-service environment variable inconsistencies.

• Standardized ports, networking, and service-to-service URLs.

• Ensured all services compile cleanly, include proper dist bundles, and run identically locally and in staging.
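
One way to catch cross-service environment variable inconsistencies is to compare each service's settings and report any variable that services define with different values. The sketch below is a minimal example of that idea, not the check the team actually used; the function name `find_env_conflicts`, the variable names, and the port numbers are all hypothetical.

```python
from typing import Dict


def find_env_conflicts(envs: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return variables that services define with differing values.

    `envs` maps a service name to its environment variables. The result
    maps each conflicting variable to the value seen in each service.
    """
    seen: Dict[str, Dict[str, str]] = {}
    for service, env in envs.items():
        for key, value in env.items():
            seen.setdefault(key, {})[service] = value
    return {
        key: by_service
        for key, by_service in seen.items()
        if len(set(by_service.values())) > 1
    }


if __name__ == "__main__":
    # Hypothetical values chosen only to show a mismatch.
    envs = {
        "orchestrator": {"KGF_URL": "http://kgf:4002", "LOG_LEVEL": "info"},
        "rlie": {"KGF_URL": "http://kgf:4003", "LOG_LEVEL": "info"},
    }
    for key, by_service in find_env_conflicts(envs).items():
        print(f"{key} differs: {by_service}")
```

A check like this could run in CI against the parsed .env files so that a mismatched port or service URL fails the build before it reaches staging.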

🔐 Production-Grade Networking

• Added an Nginx reverse proxy for routing and TLS termination.

• Implemented Let’s Encrypt certificates for secure external access.

• Ensured non-public endpoints remain internal and protected.

📘 Documentation

• Created a complete EC2 Deployment Runbook.

• Updated .env.example, docker-compose files, and internal service docs.

• Prepared everything for the next phase: user validation.

What’s Next

With the MVP deployed, we are shifting focus toward:

• Guided demo sessions

• User validation and structured feedback

• Iterating on the admin tooling and access controls

• Exploring hosted options for scalable production deployment

This milestone proves the architecture works end-to-end and sets the stage for rapid iteration and onboarding of early users.